I’d like to share an app I’ve made that helps with planning an imaging sequence through the night. I’ve seen many great apps that show the altitude of an object throughout the night, so you can check when a potential target gets too low. However, I haven’t seen one that combines all the targets on your list to give better context about when you might want to switch from one to another. Prior to writing this, I’d constantly toggle between different targets in SkySafari or even Telescopius to get the altitude of my potential targets at a certain time.

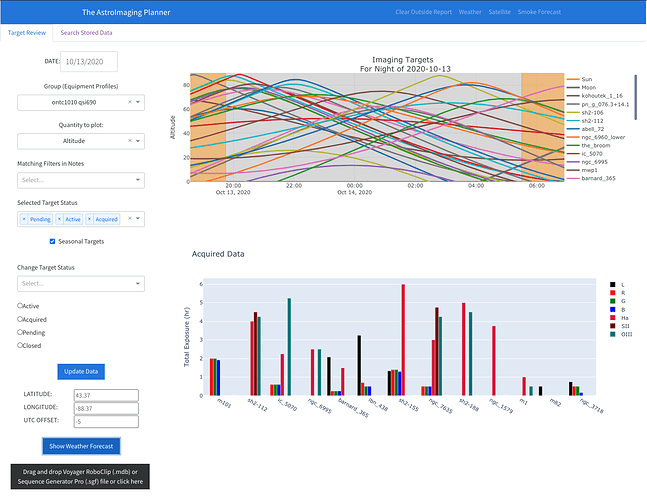

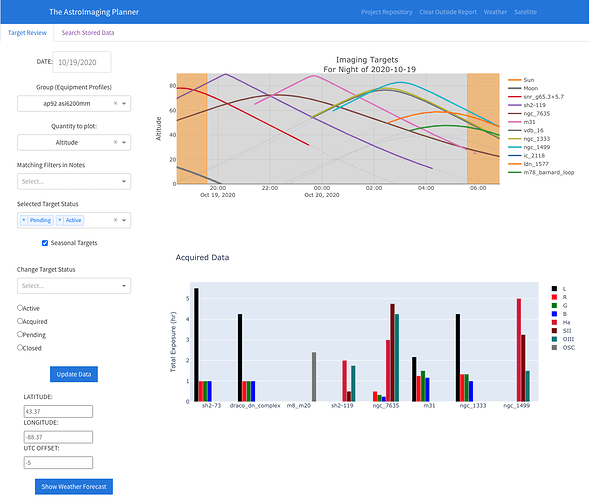

Instead, I created a web app that runs locally, pulls in all the targets from Voyager’s RoboClip database, and displays their altitude over the course of a night. It includes the Moon’s position as well, so you can plan narrowband vs. LRGB imaging around it. Here’s a view of it:

The top chart shows the altitude of each target (and the Moon) over the course of the night, with astronomical dawn/dusk marked by the orange bands. Each curve can be easily hidden. I usually switch from one target to another when their altitude lines cross, so I keep collecting good-quality data. All of this is calculated on the fly: I have the RA/Dec for each target, and with the geolocation and time, I can calculate the Alt/Az with some Python modules. The bottom chart is a summary of the data collected so far.
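As a rough illustration of that calculation, here is a minimal sketch using only the Python standard library. It is not the app's actual code (the modules it uses aren't named here); it applies the standard sidereal-time and spherical-trigonometry formulas and ignores refraction, precession, and the UTC/UT1 difference. The function names `julian_date`, `gmst_deg`, `altitude_deg`, and `first_crossing` are made up for this example, and longitude is assumed to be east-positive.

```python
import math
from datetime import datetime, timedelta, timezone

def julian_date(t: datetime) -> float:
    """Julian Date for a timezone-aware datetime."""
    # The Unix epoch (1970-01-01T00:00:00Z) corresponds to JD 2440587.5.
    return t.timestamp() / 86400.0 + 2440587.5

def gmst_deg(t: datetime) -> float:
    """Approximate Greenwich mean sidereal time in degrees (UTC treated as UT1)."""
    days = julian_date(t) - 2451545.0  # days since J2000.0
    return (280.46061837 + 360.98564736629 * days) % 360.0

def altitude_deg(ra_deg, dec_deg, lat_deg, lon_deg, t):
    """Geometric altitude of a target in degrees (no refraction correction)."""
    lst = gmst_deg(t) + lon_deg          # local sidereal time, east-positive longitude
    ha = math.radians(lst - ra_deg)      # hour angle
    lat, dec = math.radians(lat_deg), math.radians(dec_deg)
    sin_alt = (math.sin(lat) * math.sin(dec)
               + math.cos(lat) * math.cos(dec) * math.cos(ha))
    # Clamp to guard against floating-point rounding just outside [-1, 1].
    return math.degrees(math.asin(max(-1.0, min(1.0, sin_alt))))

def first_crossing(a, b, lat_deg, lon_deg, start, end, step=timedelta(minutes=5)):
    """First sample time at which the higher of two (ra_deg, dec_deg) targets changes.

    Returns None if the altitude curves do not cross between start and end.
    """
    prev = None
    t = start
    while t <= end:
        diff = (altitude_deg(*a, lat_deg, lon_deg, t)
                - altitude_deg(*b, lat_deg, lon_deg, t))
        if prev is not None and (prev > 0) != (diff > 0):
            return t
        prev = diff
        t += step
    return None

if __name__ == "__main__":
    # Hypothetical site and targets, purely for illustration.
    dusk = datetime(2023, 9, 15, 3, 0, tzinfo=timezone.utc)
    dawn = dusk + timedelta(hours=8)
    target_a = (314.75, 44.5)   # (RA, Dec) in degrees
    target_b = (10.68, 41.27)
    print(first_crossing(target_a, target_b, 40.0, -105.0, dusk, dawn))
```

Sampling every few minutes is plenty for planning purposes, since the curves change slowly; a library such as Astropy would be the better choice if refraction or higher accuracy matters.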

On the left are settings to change the date, in case you are planning weeks or more in advance of a trip. There’s a dropdown for selecting equipment profiles, for which I use the Group field in the RoboClip database. There is also a dropdown to select targets by matching filters, which I store in the notes section of the RoboClip entry.

I’ve also added an option for tracking each target’s status. Right now, I just have four:

* Pending - targets I want to image, but haven’t started yet

* Active - this target is actively being imaged this season

* Acquired - this is when I think I have enough data to start processing it

* Closed - I’ve processed and shared this target

The two charts on the right can also be filtered by these statuses.
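To make the status idea concrete, here is a small sketch of how the four statuses and a status filter could be modeled. The `Status`, `Target`, and `filter_by_status` names are hypothetical and only for illustration; this is not how the app stores them.

```python
from dataclasses import dataclass
from enum import Enum

class Status(Enum):
    PENDING = "Pending"     # want to image, not started yet
    ACTIVE = "Active"       # being imaged this season
    ACQUIRED = "Acquired"   # enough data to start processing
    CLOSED = "Closed"       # processed and shared

@dataclass
class Target:
    name: str
    status: Status

def filter_by_status(targets, wanted):
    """Keep only the targets whose status is in the wanted set."""
    return [t for t in targets if t.status in wanted]

if __name__ == "__main__":
    # Hypothetical target list.
    targets = [
        Target("NGC 7000", Status.ACTIVE),
        Target("M31", Status.PENDING),
        Target("M42", Status.CLOSED),
    ]
    for t in filter_by_status(targets, {Status.PENDING, Status.ACTIVE}):
        print(t.name, t.status.value)
```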

There are also various weather tools, such as the local forecast from Clear Outside, NWS and GOES satellite links, and, more relevant lately, a smoke forecast.

The weather here is pretty unpredictable, so this has really helped me coordinate and streamline efforts across the 2-3 rigs I set up every night.

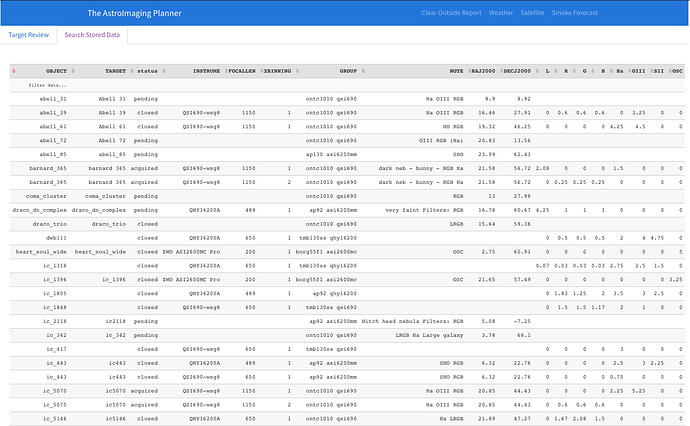

I also have another tab that shows each target with the gear I want to use to capture it, its status (pending/active/acquired/closed), and the exposure summaries. Here’s a view of that:

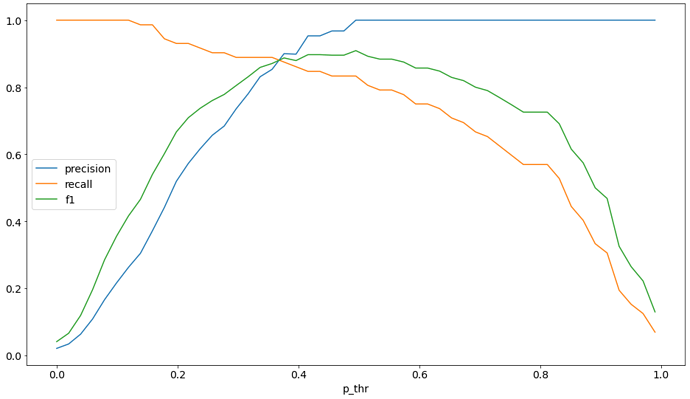

I also have a contrast view in development, which takes into account the bandwidth of the filters and the SNR under certain levels of light pollution (LP), whether it’s artificial light from the city or natural light from the Moon. This can also be extended to include the effect of aerosols in the atmosphere, like the smoke we’ve been getting over much of the US this year.

My plan is to eventually open source this project so that others can contribute if they want to. For me right now, it’s a pet project, and one that’s helped me keep my sessions straight. With it, I’ve managed to image a lot more this year than in prior years, with 2x the total exposure of my previous best year.

If you have any questions/comments/suggestions, let me know!

Gabe