Stay tuned … !

Very interesting! I'm interested to see whether this is intended for a single session or for multiple sessions of Voyager, in which case the sequence file will need to be written to disk after each image is captured.

YES!!!

I am running a Python script to inspect the FITS files created by Voyager and manually doing some load balancing. An integrated solution would be fantastic.

Cheers,

José

FWIW: You could achieve persistent status if you scan the FITS files written to disk and update the tallies accordingly.

This way you never have to touch the session file. You simply inspect the images and delete the files that don't pass your quality standard, or you can add an external process that checks the data quality after each run and automatically purges the bad files.
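For anyone who wants to try this, here is a minimal sketch of the disk-scan idea. It assumes the frames carry the `OBJECT` and `FILTER` keywords in the primary header (I have not checked exactly what Voyager writes, so adjust the names), and it reads the header with the standard library only. `read_header` and `tally_frames` are names made up for this example; `astropy.io.fits` would be the more robust choice for real use.

```python
from collections import Counter
from pathlib import Path

BLOCK = 2880  # FITS headers are stored in 2880-byte blocks
CARD = 80     # each header card is 80 ASCII characters


def read_header(path):
    """Return simple keyword/value pairs from the primary FITS header.

    Simplified on purpose: values are kept as strings and escaped
    quotes ('') inside string values are not handled.
    """
    header = {}
    with open(path, "rb") as fh:
        while True:
            block = fh.read(BLOCK)
            if len(block) < BLOCK:
                return header  # truncated file: return what was read
            for i in range(0, BLOCK, CARD):
                card = block[i:i + CARD].decode("ascii", errors="replace")
                key = card[:8].strip()
                if key == "END":
                    return header
                if card[8:10] != "= ":
                    continue  # COMMENT, HISTORY or blank card
                value = card[10:].lstrip()
                if value.startswith("'"):
                    # quoted string: keep the text up to the closing quote
                    value = value[1:].split("'", 1)[0].rstrip()
                else:
                    # number or logical: drop any trailing "/ comment"
                    value = value.split("/", 1)[0].strip()
                header[key] = value


def tally_frames(capture_dir):
    """Count the frames found on disk per (target, filter)."""
    counts = Counter()
    for path in sorted(Path(capture_dir).rglob("*")):
        if path.suffix.lower() not in {".fit", ".fits"}:
            continue
        hdr = read_header(path)
        # OBJECT / FILTER are assumed keyword names, see the note above
        counts[(hdr.get("OBJECT", "unknown"), hdr.get("FILTER", "none"))] += 1
    return counts
```

Because the tallies are rebuilt from whatever is on disk, deleting a bad frame is enough to put it back on the to-do list.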

Cheers,

Jose

So excited for this to be implemented! I've been implementing a solution similar to what @Jose_Mtanous mentioned, using Python to keep track of and adjust my sequences. Awesome work Leo!

Gabe

Thanks for the reply, Jose.

I have been thinking along the same lines as you suggest. It seems several others have already taken this approach.

The holy grail for me is automating the quality assessment of an image. For other processes and scripts I've been using Python. Have you got your quality assessment automated? I'd be interested to hear what approach you took if you have.

cheers

Nick

This will be awesome Leo. Can’t wait!

Leigh.

This is really excellent, thank you Leo.

Hi Nick,

I have 2 capture rigs in my observatory, and the capture folders are synchronized to my main computer using Syncthing. After a session I visually inspect the images and delete whatever is not good enough. The inspection happens on my main computer and the deletions automatically sync back to the observatory computers. One nice feature of Syncthing is that you don't need internet to use it: as long as the computers are on the same subnet it just works, so it works even when I am in the field.

The next night, before imaging, I run my Python script. It inspects the capture folders, rebuilds the tallies per target per filter and updates a Google spreadsheet. Then I adjust the DragScript/sequences accordingly to rebalance the targets.
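The rebalancing step can be sketched roughly like this. It is not my actual script, just an illustration: `planned` and `captured` are hypothetical dicts keyed by `(target, filter)`, where `captured` would come from the folder scan, and the function names are made up for the example.

```python
from collections import defaultdict


def remaining_frames(planned, captured):
    """Frames still needed per (target, filter); finished slots are dropped."""
    remaining = {}
    for key, goal in planned.items():
        left = goal - captured.get(key, 0)
        if left > 0:
            remaining[key] = left
    return remaining


def completion_by_target(planned, captured):
    """Fraction of the planned frames already on disk, per target."""
    goal = defaultdict(int)
    done = defaultdict(int)
    for (target, flt), n in planned.items():
        goal[target] += n
        # extra frames beyond the plan do not count toward completion
        done[target] += min(captured.get((target, flt), 0), n)
    return {t: done[t] / goal[t] for t in goal if goal[t] > 0}


def least_complete_first(planned, captured):
    """Targets ordered so the one furthest from its goal comes first."""
    frac = completion_by_target(planned, captured)
    return sorted(frac, key=lambda t: (frac[t], t))


if __name__ == "__main__":
    planned = {("M31", "L"): 40, ("M31", "R"): 20, ("M42", "Ha"): 30}
    captured = {("M31", "L"): 40, ("M31", "R"): 5}
    print(remaining_frames(planned, captured))
    print(least_complete_first(planned, captured))
```

Sorting by completion is only one possible policy. Altitude, moon and deadlines would matter in a real scheduler.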

I haven't automated the frame rejection because I haven't found a reliable way to do it. At one point I tried to use PI frame selector, but it always gave some false positives/negatives. I am keeping all the rejected frames, hoping that one day I can use them to train a machine learning tool to automate the rejection process. To be honest, the rejection process is very fast, and I usually learn something about the capture process that could potentially be used to fine-tune it.

Cheers,

José

I am so excited about this, Leo. I have been hoping for this functionality for a long time. Thanks for making it happen.

Kind regards,

Glenn

Since you're using Python, you can use astropy and photutils to measure the characteristics of your images. I have some working versions of this that I plan to add to a cron job (or Airflow) at some point. It will evaluate incoming frames as needed and write the measurements to a db that will be surfaced on a web app. That can give a summary of the prior night's runs and flag potentially bad files.

I ran into this issue lately with a sequence I had saved that was based on an LRGB sequence, where I focused using the L filter since the filters were all parfocal. I used this sequence for a target requiring Ha and OIII, so focusing on L left my stars a bit out of focus. With a check like this I would've caught it early and not wasted several nights.
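As a rough idea of the flagging step only: the sketch below assumes a per-frame star FWHM has already been measured somewhere else (for example with photutils) and just marks frames whose FWHM sits well above the night's median. The median/MAD rule is my own choice for illustration, and `flag_soft_frames` is a made-up name.

```python
from statistics import median


def flag_soft_frames(fwhm_by_file, n_sigma=3.0):
    """Return the file names whose FWHM is unusually large.

    fwhm_by_file: dict of file name -> measured FWHM (any consistent unit).
    Uses the median and the median absolute deviation (MAD) so that a
    few bad frames do not distort the threshold.
    """
    if not fwhm_by_file:
        return []
    values = list(fwhm_by_file.values())
    med = median(values)
    mad = median(abs(v - med) for v in values)
    # 1.4826 * MAD estimates the standard deviation for normal data
    sigma = 1.4826 * mad
    if sigma == 0:
        return []  # all frames identical: nothing stands out
    return sorted(
        name for name, v in fwhm_by_file.items() if v - med > n_sigma * sigma
    )
```

Note that this only catches frames that are worse than the rest of the same night. If a whole night is slightly out of focus, as in my case, you would need to compare against earlier nights or a fixed limit as well.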

Hi Jose

That must be a sweet setup!

I think you're right: at the end of the day, each and every image needs to be eyeballed!

cheers

Nick

Thanks Gabe, I've been playing with astropy and photutils but I've found it heavy going!

cheers

Nick

This looks great! Feels like a leap toward Voyager Advanced. This is the single thing I’ve been most missing and I’m excited for it! Thanks!!

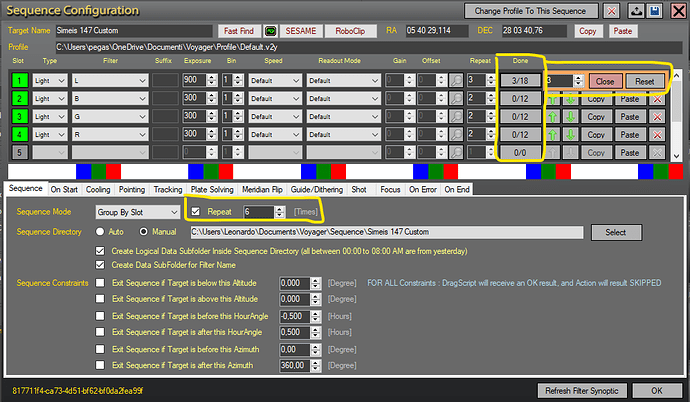

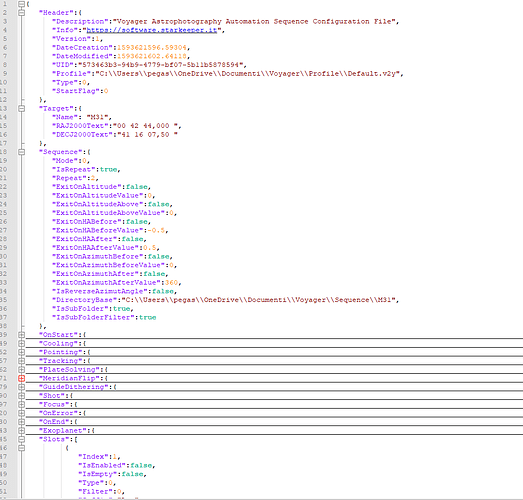

In the next daily build, to let us manage the shot memory, we will change the sequence file format and switch to JSON. So I'm sure someone will be happy about that.

You will find two open buttons, one for the old sequence format and one for the new one. You will only be able to save in the new format.

Leo, this is really nice.

What would happen if you manage the sequence using DragScript? For instance, sometimes I use a generic RGB sequence to capture multiple targets. The sequence doesn't hold any target info; the target info is specified in DragScript. Are you planning to support sequence memory in DragScript? Or do we need to create a sequence per target and avoid generic sequences?

Thanks,

José

Memory belongs to the sequence; DragScript just runs the sequence. You will have a flag in the sequence to say whether to use memory or not. If you use memory and the sequence is finished, the sequence will be skipped.

So it depends on you what to do.
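To make the rule concrete, here is one reading of it as a tiny Python sketch. This is not Voyager code, only an illustration: `use_memory`, `planned` and `done` are hypothetical names, with the two dicts mapping a slot name to a frame count.

```python
def should_run(use_memory, planned, done):
    """Decide whether a sequence runs, following the rule described above."""
    if not use_memory:
        return True  # no memory: the sequence always runs from the start
    # with memory: run only while at least one slot is still short of its goal
    return any(done.get(slot, 0) < goal for slot, goal in planned.items())
```

With memory switched off, a generic sequence shared by several targets behaves as it does today.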

That's a great feature!! Being a mobile astrophotographer, I rarely do all the shots in one night, so I have to set the remaining number of frames by hand the next night.

One feature that would be great is keeping the info for unchecked slots when saving the sequence.

Cheers !!

This makes me VERY happy Leo! The JSON is perfect for creating a modified sequence file in my external scheduler script and then running it in DragScript. I think this should allow me to achieve my goal of a zero-update (no editing) DragScript plus a scheduler that runs from a database, so the only changes for nightly runs would be to the database.

I can use the Web Dashboard’s excellent framing utility to populate the RoboClip database with my projects / targets, and then read that database in my scheduler script to choose the target to run next.
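The scheduler side could look something like the sketch below. Big caveat: the new sequence format is not published in this thread, so the keys `slots`, `filter`, `count` and `enabled` are pure placeholders and will need to be replaced with whatever the real file uses. `write_nightly_sequence` is also a made-up name.

```python
import json
from pathlib import Path


def write_nightly_sequence(template_path, output_path, remaining):
    """Copy a JSON sequence template, setting each slot to its remaining count.

    remaining: dict of filter name -> frames still needed.
    All JSON keys used here are hypothetical placeholders.
    """
    seq = json.loads(Path(template_path).read_text(encoding="utf-8"))
    for slot in seq["slots"]:                      # hypothetical key
        left = remaining.get(slot["filter"], 0)    # hypothetical key
        slot["count"] = left                       # hypothetical key
        slot["enabled"] = left > 0                 # hypothetical key
    Path(output_path).write_text(json.dumps(seq, indent=2), encoding="utf-8")
    return seq
```

The template stays untouched and the DragScript always points at the same output file, which is what keeps the DragScript itself edit-free.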

Will the sequence progress counter be stored in the RoboClip database or somewhere else?

Cheers,

Rowland

The progress counter will be in a database or a local file.